Introduction

In this tutorial, we explore how to connect OpenAI’s Chat Completions API to external services through function calling. This capability allows the model to generate JSON objects that serve as instructions for calling external functions based on user input.

AI is no longer just for chatting. With function calling, it can take real-world actions such as sending emails, fetching live data, or updating databases. This tutorial shows how to set up structured functions, validate parameters, and handle responses to build AI applications that do more than just respond. Get ready to turn your chatbot into an active problem-solver that connects with the rest of your tech setup.

Understanding Function Calling in the Chat Completions API

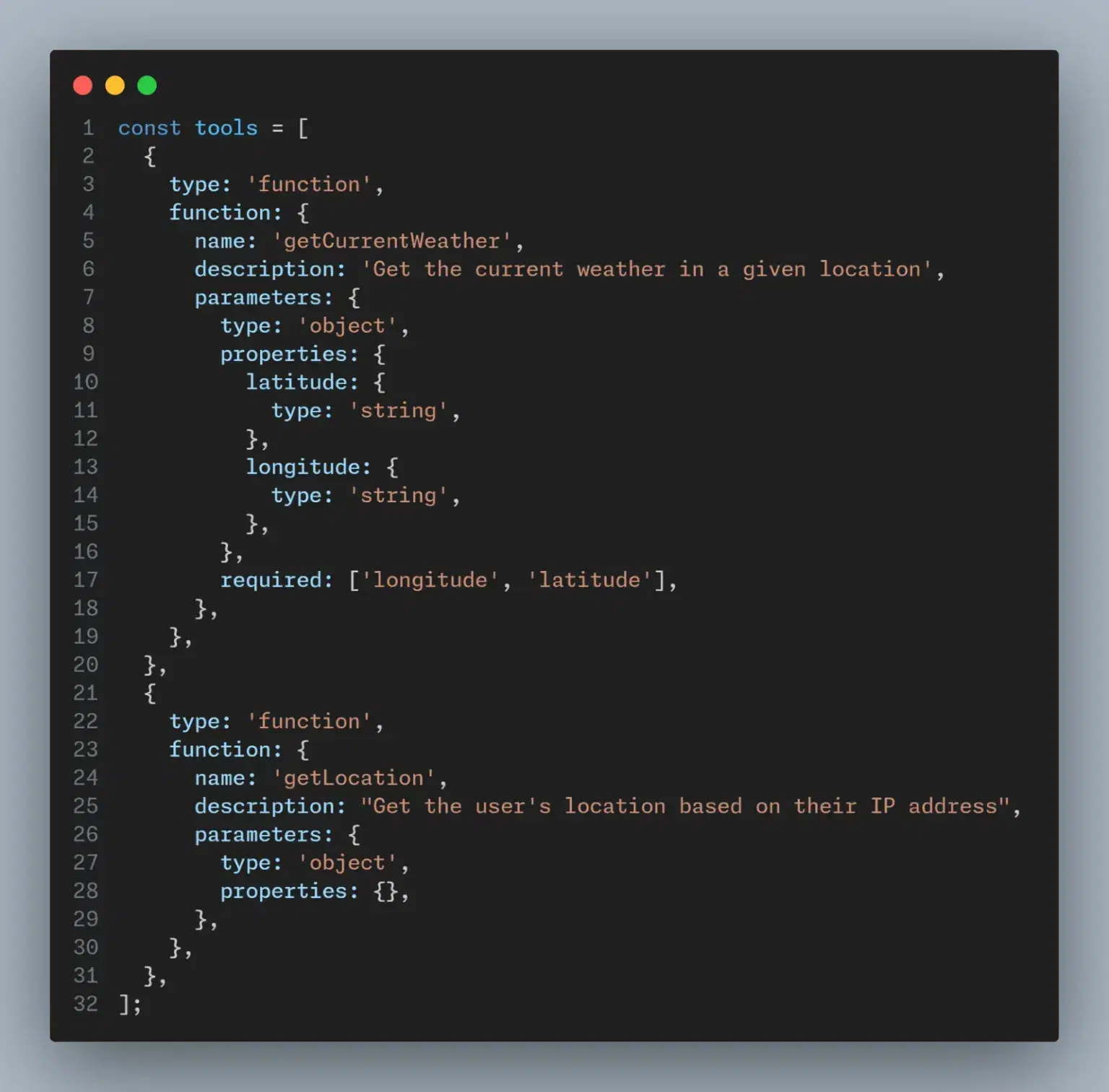

The Chat Completions API provides an optional “tools” parameter that we can use to supply function definitions. The LLM then selects a function and generates arguments that match the provided function specifications.

One important thing to understand is that OpenAI doesn’t call any function. It simply returns the name of the function to call along with its arguments, and the developer can then execute that function.

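1. Optional “tools” Parameter: Function definitions are passed to the API through the optional “tools” parameter.

2. LLM Function Selection: The LLM selects a function and generates arguments that match its specification.

3. No Automatic Function Execution: OpenAI does not execute any function itself.

4. Developer Responsibility: The developer executes the selected function in their own code.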

Common Use Cases

The completion response is computed and after that it is returned.

1. Make calls to external APIs: Use tools to call external APIs. By defining functions, you can build agents that understand and perform external tasks. For example, getting the user’s location or fetching the pending tasks for this week:

get_pending_tasks(type: 'week|month|quarter')

get_current_location()

send_friend_request(userId)

2. Connect to SQL Database: Define functions that turn natural-language requests into database queries.

get_data_from_db(query)
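As a rough sketch of this second use case, `get_data_from_db(query)` could be backed by a local function like the one below. The in-memory SQLite database, the `tasks` table, and the SELECT-only guard are assumptions made for illustration; the function signature is the only part taken from the text above.

```python
import sqlite3

def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical `tasks` table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE tasks (id INTEGER PRIMARY KEY, title TEXT, done INTEGER)")
    conn.executemany(
        "INSERT INTO tasks (title, done) VALUES (?, ?)",
        [("Write report", 0), ("Send invoice", 1), ("Book flights", 0)],
    )
    conn.commit()
    return conn

def get_data_from_db(conn: sqlite3.Connection, query: str) -> list[dict]:
    """Run a model-generated query, allowing only a single SELECT statement."""
    statement = query.strip().rstrip(";")
    # Naive guard (an assumption for this sketch): reject anything that is
    # not a single SELECT, since the query text comes from the model.
    if not statement.lower().startswith("select") or ";" in statement:
        raise ValueError("Only a single SELECT statement is allowed")
    cursor = conn.execute(statement)
    columns = [col[0] for col in cursor.description]
    return [dict(zip(columns, row)) for row in cursor.fetchall()]

conn = make_demo_db()
pending = get_data_from_db(conn, "SELECT title FROM tasks WHERE done = 0 ORDER BY id")
print(pending)  # [{'title': 'Write report'}, {'title': 'Book flights'}]
```

The string check is deliberately simple. In a real deployment, a read-only database user would be a sturdier safeguard than inspecting the query text.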

How It Works

Integrating LLMs with external tools involves a few straightforward steps:

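1. Define Functions: Describe each function’s name, purpose, and parameters so that it can be passed to the model through the “tools” parameter.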

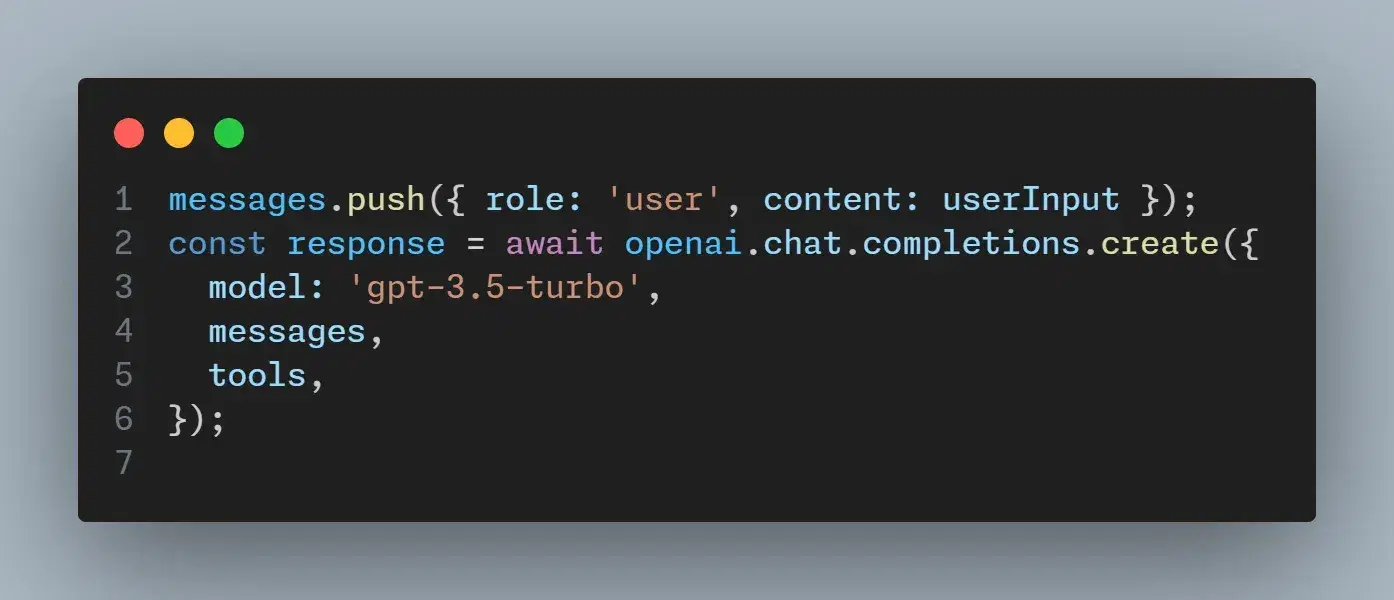
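For readers who cannot see the screenshot, here is a minimal sketch of what function definitions for the “tools” parameter can look like, using two of the example functions named earlier. The descriptions and the exact parameter schema are illustrative assumptions, not taken from the image.

```python
import json

# Each entry describes one function using JSON Schema for its parameters.
# Descriptions and schema details below are illustrative assumptions.
tools = [
    {
        "type": "function",
        "function": {
            "name": "get_pending_tasks",
            "description": "List the user's pending tasks for a period.",
            "parameters": {
                "type": "object",
                "properties": {
                    "type": {
                        "type": "string",
                        "enum": ["week", "month", "quarter"],
                        "description": "Period to look at.",
                    }
                },
                "required": ["type"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "get_current_location",
            "description": "Return the user's current location.",
            # A function without arguments still declares an empty object schema.
            "parameters": {"type": "object", "properties": {}},
        },
    },
]

# The list must be JSON-serializable, since it is sent as part of the request.
print(json.dumps([tool["function"]["name"] for tool in tools]))
```

Restricting `type` with an `enum` is one way to keep the model from inventing periods that the function cannot handle.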

2. Call the Model: Send the user’s message to the Chat Completions API together with the function definitions.
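A minimal sketch of this step, assuming the official `openai` Python package is used. The model name, the helper names `build_request` and `call_model`, and the one-tool list are assumptions chosen for illustration; the client is passed in so the snippet itself runs without network access.

```python
def build_request(user_message: str, tools: list) -> dict:
    """Assemble keyword arguments for a Chat Completions request."""
    return {
        "model": "gpt-4o-mini",  # assumed model name; use one you have access to
        "messages": [{"role": "user", "content": user_message}],
        "tools": tools,
        "tool_choice": "auto",  # let the model decide whether to call a tool
    }

def call_model(client, request: dict):
    """Send the request; `client` is expected to be an `openai.OpenAI()` instance."""
    return client.chat.completions.create(**request)

# A one-tool list, kept short for the example.
tools = [
    {
        "type": "function",
        "function": {
            "name": "get_current_location",
            "description": "Return the user's current location.",
            "parameters": {"type": "object", "properties": {}},
        },
    }
]

request = build_request("Where am I right now?", tools)
print(sorted(request))  # ['messages', 'model', 'tool_choice', 'tools']
```

With the package installed and an API key configured, `call_model(OpenAI(), request)` would send the request and return the completion.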

3. Handle the Output:

The JSON output from the model will contain the necessary details to call the external function. This may include function names and argument values.

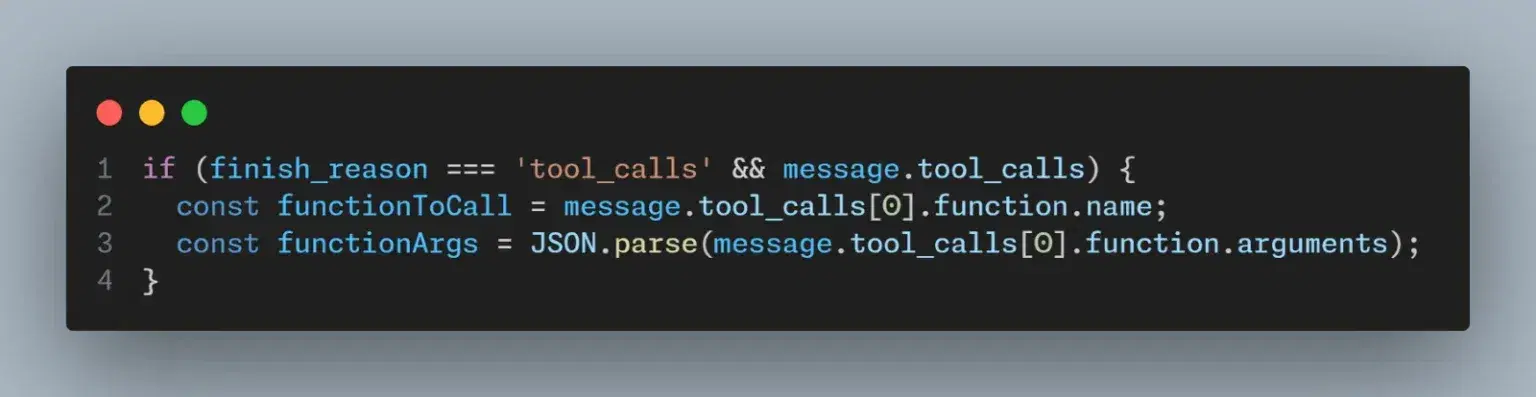

If a tool was called, the “finish_reason” in the completion response will be equal to “tool_calls”. The message will also include a “tool_calls” array that contains the name of each tool as well as its arguments.

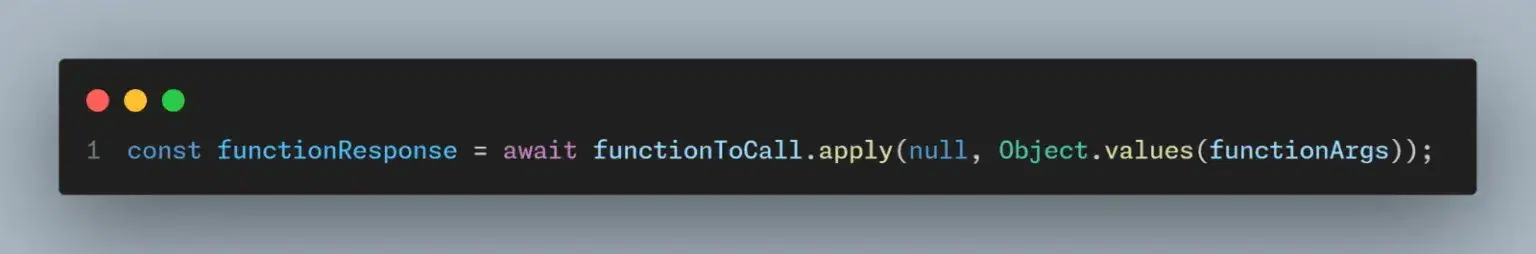

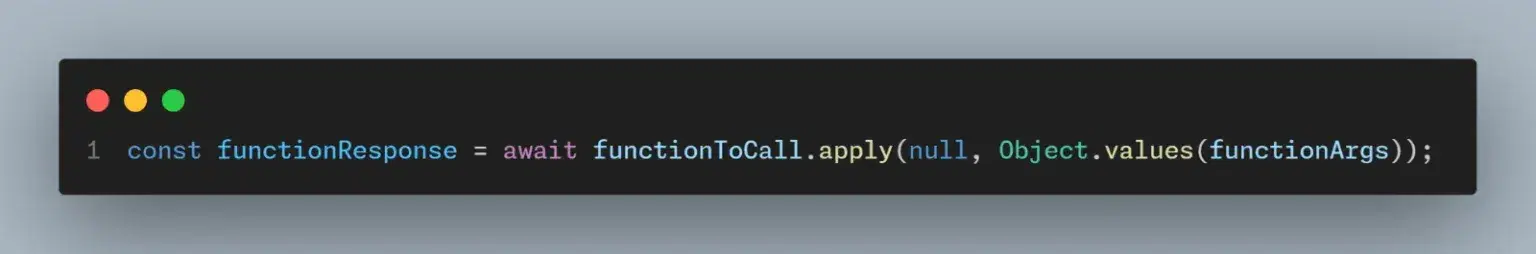
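The check described above can be sketched as follows. The snippet works on a plain dictionary form of the response (for example, what `response.model_dump()` returns in the Python SDK); the sample response is hand-written, trimmed to the fields used here, and its id is made up.

```python
import json

def extract_tool_calls(response: dict) -> list[dict]:
    """Return id, name, and parsed arguments for each tool call, or [] if none."""
    choice = response["choices"][0]
    if choice.get("finish_reason") != "tool_calls":
        return []
    calls = []
    for call in choice["message"].get("tool_calls") or []:
        calls.append({
            "id": call["id"],
            "name": call["function"]["name"],
            # Arguments arrive as a JSON-encoded string, not a dict.
            "arguments": json.loads(call["function"]["arguments"]),
        })
    return calls

# Hand-written sample response for illustration only.
sample_response = {
    "choices": [{
        "finish_reason": "tool_calls",
        "message": {
            "role": "assistant",
            "content": None,
            "tool_calls": [{
                "id": "call_abc123",  # made-up id
                "type": "function",
                "function": {
                    "name": "get_pending_tasks",
                    "arguments": '{"type": "week"}',
                },
            }],
        },
    }]
}

print(extract_tool_calls(sample_response))
```

Because the arguments are generated by the model, `json.loads` can fail on malformed output, so production code would likely wrap it in error handling.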

4. Execute Functions: Run the function the model selected in your own code, using the arguments it provided.
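One possible way to handle this step is a small registry that maps function names to local implementations. The `AVAILABLE_FUNCTIONS` dictionary, the `execute_tool_call` helper, and the stand-in task data are hypothetical names and values introduced for this sketch.

```python
def get_pending_tasks(type: str) -> list[str]:
    """Stand-in implementation; a real one would query a task service."""
    sample_tasks = {
        "week": ["Write report"],
        "month": ["Write report", "File taxes"],
        "quarter": ["Write report", "File taxes", "Plan offsite"],
    }
    # Validate the argument, since it was generated by the model.
    if type not in sample_tasks:
        raise ValueError(f"Unsupported period: {type}")
    return sample_tasks[type]

# Only functions listed here can ever be executed on the model's behalf.
AVAILABLE_FUNCTIONS = {"get_pending_tasks": get_pending_tasks}

def execute_tool_call(name: str, arguments: dict):
    """Look up the requested function and call it with the model's arguments."""
    func = AVAILABLE_FUNCTIONS.get(name)
    if func is None:
        raise KeyError(f"Model requested an unknown function: {name}")
    return func(**arguments)

result = execute_tool_call("get_pending_tasks", {"type": "week"})
print(result)  # ['Write report']
```

Using an explicit allow-list rather than looking names up dynamically keeps the model from triggering code that was never meant to be exposed.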

5. Process and Respond:

After executing the function, you might need to call the model again with the results to generate a user-friendly summary or further instructions.
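A minimal sketch of preparing that second call: the assistant message containing the tool call is appended to the conversation, followed by one `tool` message per result. The helper name `build_follow_up_messages`, the sample messages, and the made-up id are assumptions for illustration.

```python
import json

def build_follow_up_messages(messages: list, assistant_message: dict, tool_results: dict) -> list:
    """Append the assistant's tool-call message and one `tool` message per result."""
    follow_up = list(messages) + [assistant_message]
    for tool_call_id, result in tool_results.items():
        follow_up.append({
            "role": "tool",
            "tool_call_id": tool_call_id,  # must match the id in the tool call
            "content": json.dumps(result),  # tool output is sent back as a string
        })
    return follow_up

messages = [{"role": "user", "content": "What is still pending this week?"}]
assistant_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_abc123",  # made-up id
        "type": "function",
        "function": {"name": "get_pending_tasks", "arguments": '{"type": "week"}'},
    }],
}

follow_up = build_follow_up_messages(
    messages, assistant_message, {"call_abc123": ["Write report"]}
)
print(follow_up[-1])
```

Sending `follow_up` as the `messages` of a new request lets the model turn the raw result into a reply for the user.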

Conclusion

Integrating OpenAI’s Chat Completions API with external tools extends what applications can do. By defining functions and using the “tools” parameter, developers can create interactions that draw on real-time data and actions, making their software more responsive and versatile.

Ready to improve your business operations by bringing your data into conversations? See how Data Outlook can help you automate your processes.